Introduction

Azure Functions and the whole serverless approach have been on the market for a while, but 2019 was a crucial year for me, since I worked with them a lot more than ever before.

My client at the time was already using Node.js (with TypeScript) in his system, so we followed this approach as well and used it along with Durable Functions (which are great, by the way, and I'll cover this topic in the future). However, due to our stack, it turned out to be a bit of a headache after a while.

// Note: the problem described in this article concerns every language except C# (according to the documentation).

Test case

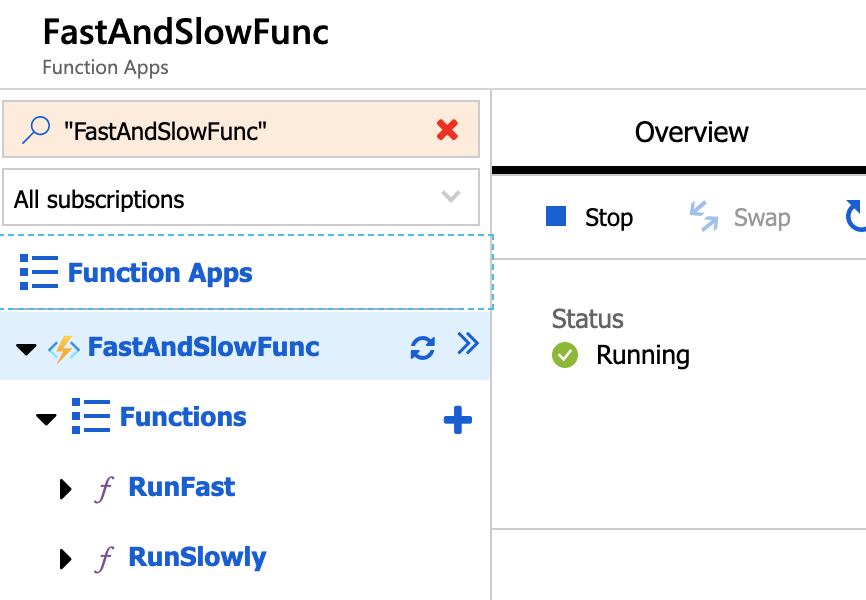

Small experiment: let's create two HTTP-triggered functions in Node.js. We'll name the first function RunFast and the other one RunSlowly.

Description: For the sake of this experiment, I created our functions directly via the Azure Portal.

// Note: my production case was based on Azure Functions in the Premium Plan, which takes advantage of multiple cores on a single machine. However, the same case in the Consumption Plan (with single-core machines) appears to perform better as well.

RunFast will just return a response instantly with this simple piece of code:

    module.exports = async function (context, req) {
        context.res = {
            body: "Fast response",
        };
    };

RunSlowly, on the other hand, will be slowed down drastically by a while loop:

    module.exports = async function (context, req) {
        const maxElapsedTimeInMilliseconds = 10000; // 10 seconds
        const timeStart = new Date().getTime();

        // Busy-wait: this loop keeps the CPU occupied until 10 seconds have passed.
        while (true) {
            const elapsedTime = new Date().getTime() - timeStart;
            if (elapsedTime > maxElapsedTimeInMilliseconds) {
                break;
            }
        }

        context.res = {
            body: "Slow response",
        };
    };

Results

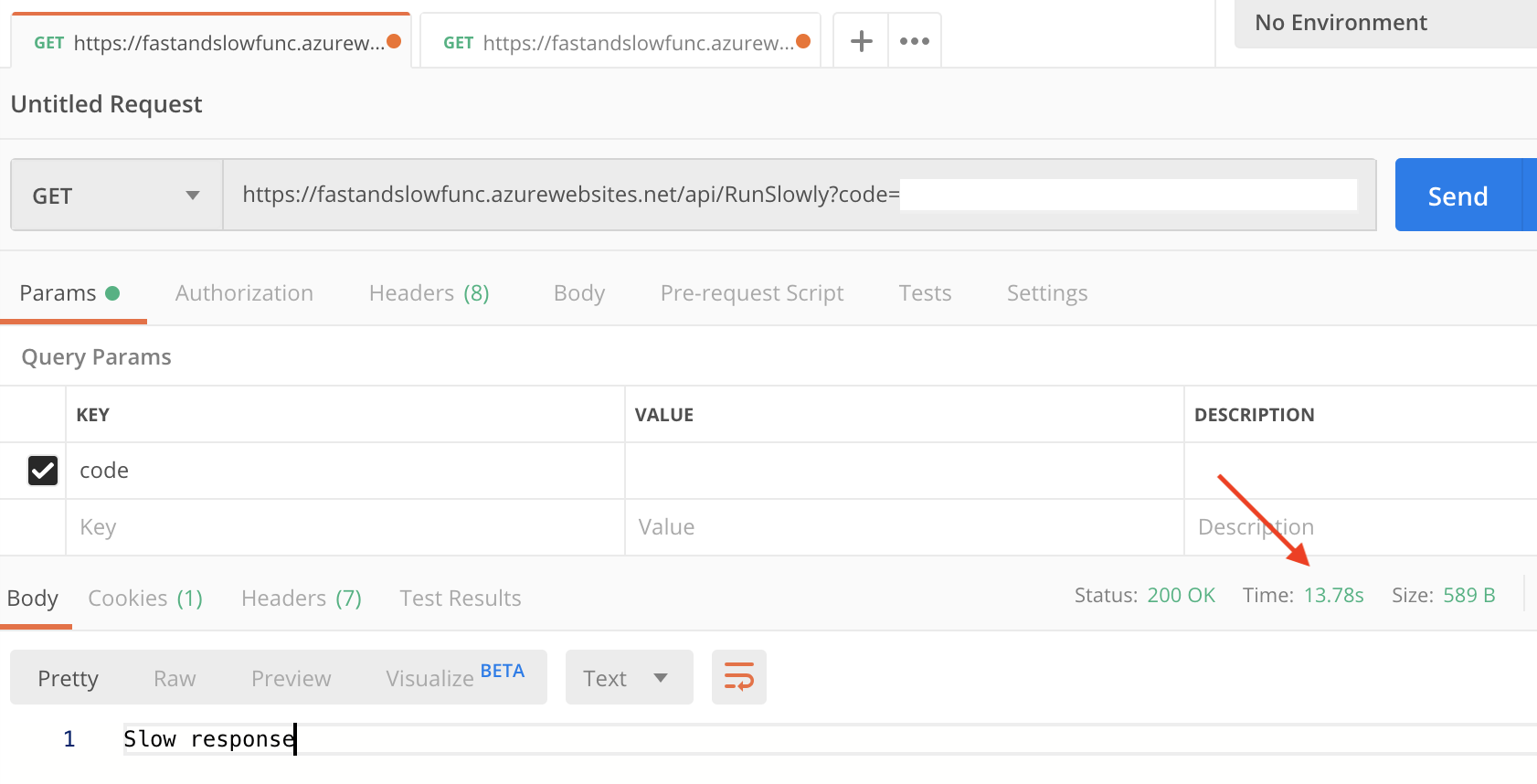

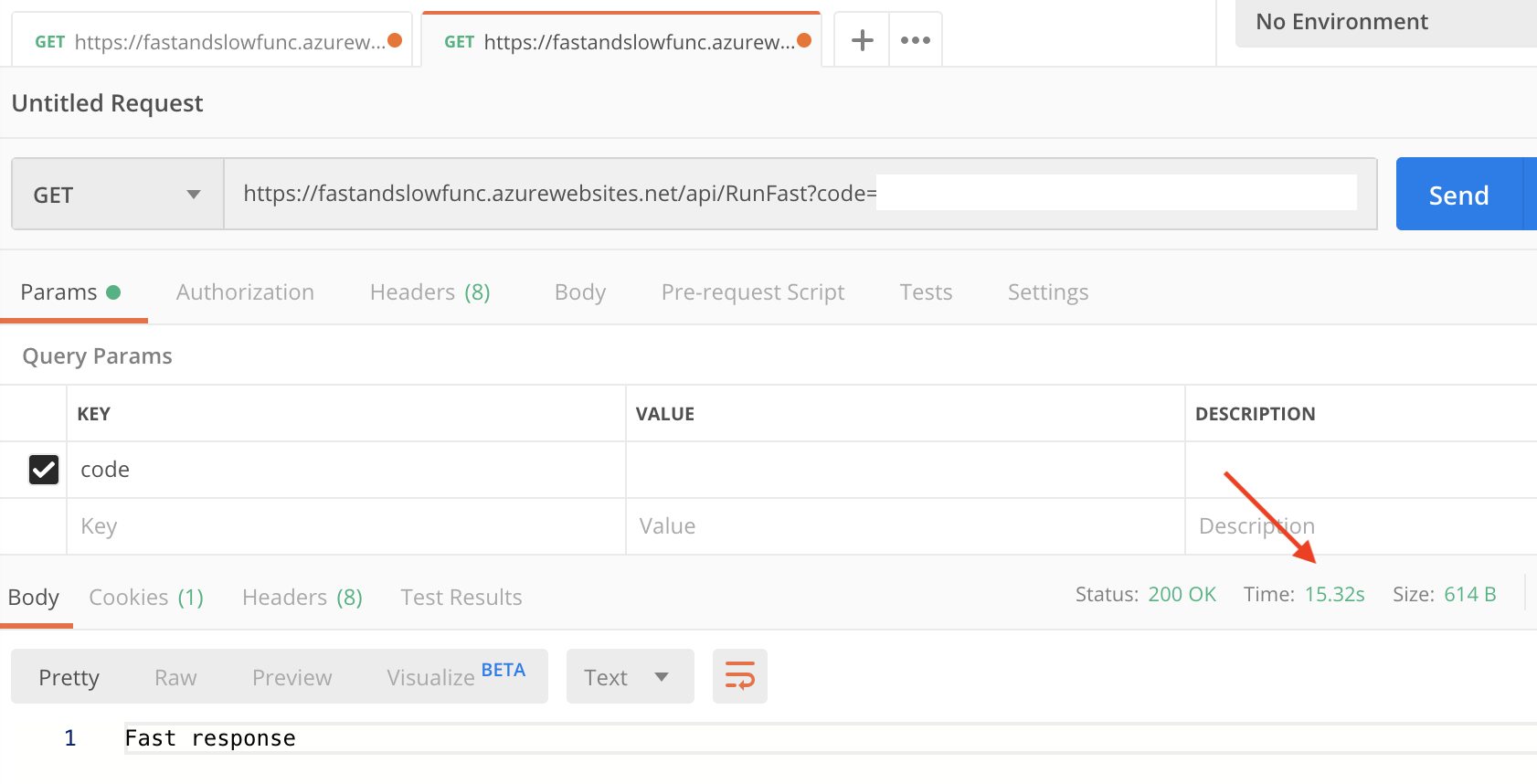

To test our newly created functions we'll use Postman. To be reliable, each test should be conducted after an app restart or after some idle time, so that we start with an empty set of machines.

First, let's call RunFast multiple times concurrently. It seems like everything works smoothly - response time was relatively small: ~300 milliseconds on average (excluding cold start).

Now, let's try RunSlowly in the same manner. It takes way longer than expected (compared to a single run of the exact same function). The first call takes a bit more than 10 seconds, but the second one is blocked until the first one finishes. As a result, in my case the first call took about 11 seconds, while the second one returned after about 18 seconds!

OK, so what about a single run of RunSlowly and a single run of RunFast immediately after that?

Description: Result of RunSlowly call. Response time: ~14 seconds.

Description: Result of RunFast call. Response time: ~16 seconds.

What we observe here is that RunFast waited until RunSlowly finished its job. Although RunFast was able to return the response in about 300 milliseconds in the first case, now it took over 15 seconds!

OK. What exactly is going on here?

First things first - a little bit of theory

How Azure Functions work

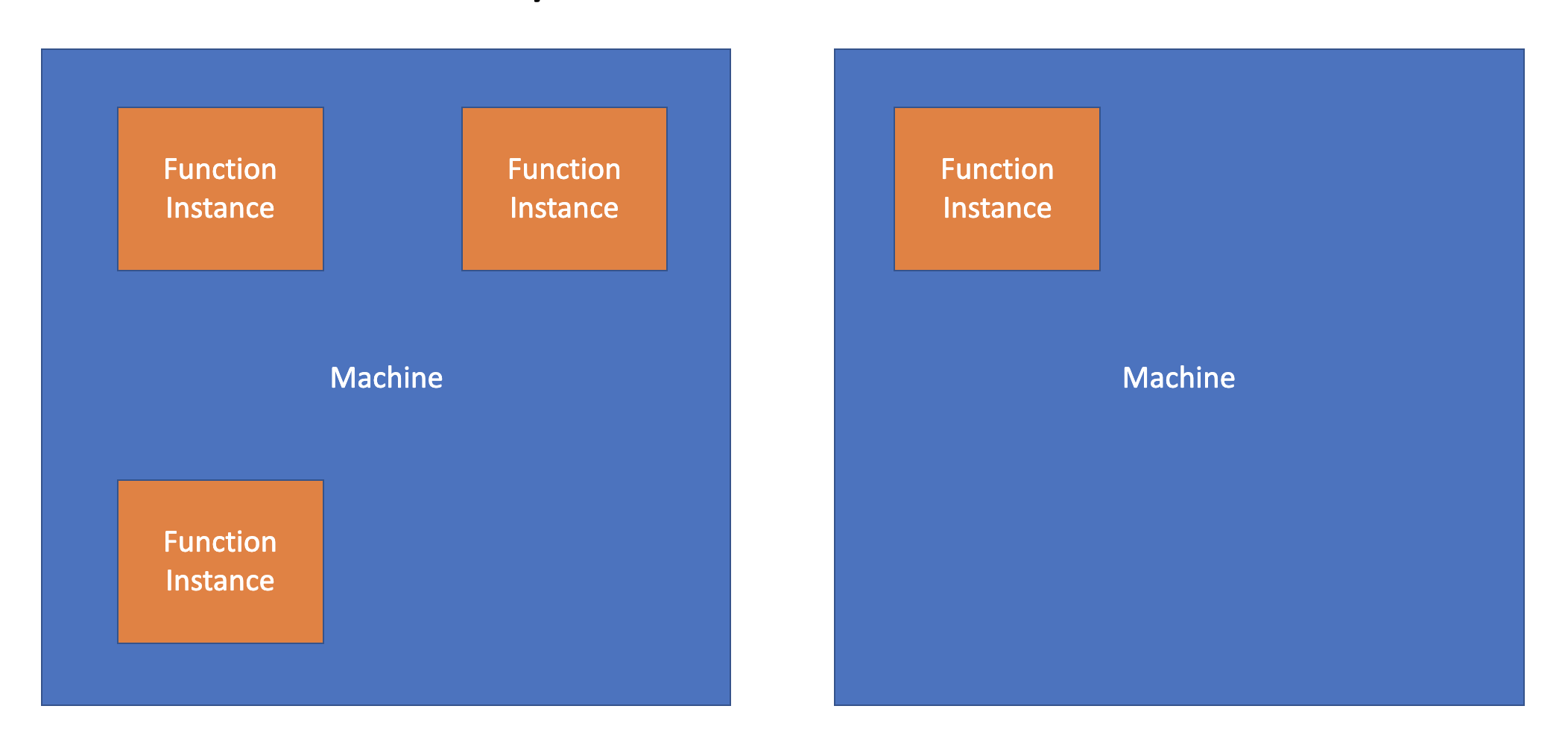

To reduce cold starts, Azure Functions simply runs multiple (up to 10 by default) instances of our code on the same machine underneath. Provisioning machines takes some time, so once half of the instance limit on a machine is in use, the platform starts preparing another machine for us. In theory, by the time we exceed the instance limit on a single machine, the next one should be warmed up and ready to run newly invoked functions.

Description: Demonstration of how function instances can be distributed across machines.

How Node.js works

Simplifying things a little, we can say that Node.js is basically a single-threaded environment. It is supported by background threads that handle asynchronous operations (like I/O), so it runs smoothly in most common cases, but it tends to have hiccups when it comes to CPU-intensive operations.
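Here is a minimal sketch of that hiccup, runnable with plain Node.js outside of Azure. The busyWait helper and the events array are names made up for this illustration; they are not part of any Azure or Node.js API. A timer scheduled with a 0 ms delay still cannot fire while a synchronous loop occupies the only JavaScript thread:

```javascript
// Hypothetical helper: keeps the single JavaScript thread busy for `ms` milliseconds.
function busyWait(ms) {
  const start = Date.now();
  while (Date.now() - start < ms) {
    // Intentionally empty: we only burn CPU here, just like RunSlowly does.
  }
}

const events = [];

// Ask the event loop to run this callback as soon as possible.
setTimeout(() => {
  events.push("timer fired");
  console.log(events.join(" -> "));
}, 0);

events.push("busy loop started");
busyWait(200); // the timer is already due, but it cannot run yet
events.push("busy loop finished");
```

The timer callback runs only after the busy loop has finished, even though it was scheduled before the loop started. This is the same effect RunFast suffers from when it shares a process with RunSlowly.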

How it all adds up

By this point you probably know what is going on. Every new instance of our functions runs in the same process (even if we call different functions within the same service plan). On top of that, the Node.js architecture deliberately does not support (note: I'm simplifying) parallel CPU-intensive tasks. This is why this blocking behaviour occurs.

If we considered two asynchronous functions instead (for example, if our slower function were just waiting for an external service such as a database or REST API to respond), the very same test case would result in almost non-blocking runs.
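To illustrate the difference, here is a small sketch that can be run with plain Node.js. Everything in it is an assumption made for illustration: fakeExternalCall stands in for a database or REST call and is simulated with a timer, and waitingHandler is a hypothetical handler, not code from my project.

```javascript
// Hypothetical stand-in for a database or REST call: resolves after `ms` milliseconds.
function fakeExternalCall(ms) {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

const log = [];

// Hypothetical handler that only waits for something external; it does not burn CPU.
async function waitingHandler(name) {
  log.push(`${name} started`);
  await fakeExternalCall(100); // the JavaScript thread is free while we wait
  log.push(`${name} finished`);
}

// Start both "requests" without waiting for the first one to finish.
const bothDone = Promise.all([
  waitingHandler("first"),
  waitingHandler("second"),
]).then(() => {
  console.log(log.join(", "));
  return log;
});
```

The second handler starts before the first one finishes, because awaiting hands the thread back to the event loop. A busy while loop never does that, which is why RunSlowly blocks everything else in its process.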

Solution

This whole article could be just a single sentence:

Please set the FUNCTIONS_WORKER_PROCESS_COUNT setting to 10 in your Function App if you use any language other than C#.

However, if it were just one sentence, we would not know why exactly we should change the default value of that setting. FUNCTIONS_WORKER_PROCESS_COUNT simply sets the maximum number of processes that the Functions runtime can start on a single machine. With it set to 10 (the maximum available value at the time of writing), invocations can be spread across separate Node.js processes, so they no longer block each other.
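One way to check whether the setting has taken effect is a small diagnostic function. This is only a sketch under assumptions: the name WhoAmI is made up, and it relies on app settings being visible to the function as environment variables. It returns the ID of the Node.js process that handled the request, so with more than one worker process running, repeated calls may start reporting different IDs.

```javascript
// Hypothetical diagnostic HTTP function (call it WhoAmI), same shape as RunFast.
async function whoAmI(context, req) {
  context.res = {
    body: {
      // ID of the Node.js worker process that served this request.
      pid: process.pid,
      // Assumption: the app setting is exposed as an environment variable.
      workerProcessCount: process.env.FUNCTIONS_WORKER_PROCESS_COUNT || "not set",
    },
  };
}

module.exports = whoAmI;
```

If every call keeps returning the same pid under concurrent load, all invocations are most likely still being served by a single process.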

Summary

Happily, we resolved our issue before any problems occurred in production, and everybody lived long and prospered.

Lesson learned: always read the whole documentation (or at least as much as possible) beforehand, and read it carefully!

Azure Functions is a great piece of technology, but although it is often repeated at conferences that we should not care about where and how it is running, we certainly should care if we want to use it without problems 😉

Raj

good article, describing the importance of functions_worker_process_count

Dawid Chróścielski

Thank you!

Yash

Thank you for this insight! Glad I decided to do some research on Azure Functions using JavaScript vs C#.

Dawid Chróścielski

I’m glad I could help! 🙂